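The SBAC (Smarter Balanced Assessment Consortium) math test is one of two tests developed to align with the Common Core national math standards. The other Common Core test is called PARCC (Partnership for Assessment of Readiness for College and Careers).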

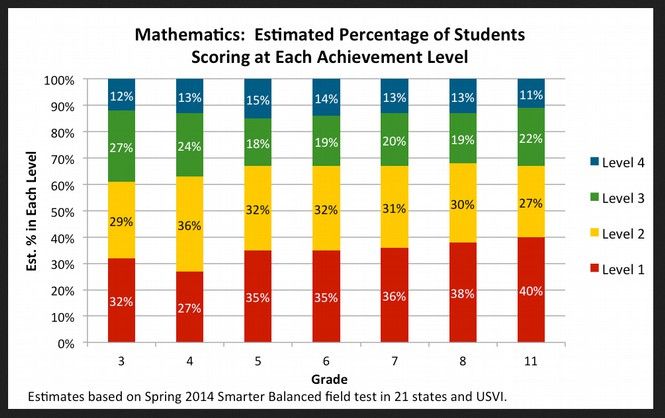

In September 2014, the group that developed the SBAC test announced for the first time that the SBAC test would fail roughly two thirds (about 67%) of the students who took the test. Here is a quote from their press release: “Smarter Balanced estimates that the percentage of students who would have scored ‘Level 3 or higher’ in math ranged from 32 percent in Grade 8 to 39 percent in Grade 3. See the chart below for further details.”

http://www.smarterbalanced.org/news/smarter-balanced-states-approve-achievement-level-recommendations/

Here is the chart that came with this press release:

Look at the blue and green areas of the far right column and you will see that only 33% of all 11th grade students will score a Level 3 or 4 on the SBAC math test. This means that 67% of all 11th graders will be labeled as failures by the SBAC math test.

Legislators and the public have repeatedly asked why the SBAC test was designed to fail 67% of all students. In a moment we will provide the official reason and then explain the real reason. But first, we will describe some of the many extremely unusual characteristics of the SBAC math test.

The SBAC math test is unique in several respects. First, it was paid for with hundreds of millions of dollars in federal “Race to the Top” funds. It is likely the single most expensive, and most profitable, high stakes test ever created. Second, the group that developed this test claims that the math test has over 40,000 possible math questions. Neither the public that paid for the test nor teachers or parents are allowed to see these questions, so the fairness of these questions and their alignment (or lack of alignment) with Common Core standards cannot be independently evaluated. But even if parents and teachers could see these 40,000 math questions, it would not matter, because the SBAC math test varies from student to student. Each student gets a different test based upon their answers to the first few questions, and there is no way of telling which questions were given to which students. This makes it impossible to assess the reliability or validity of the nearly infinite number of possible SBAC math tests. Because many educational researchers have claimed that the SBAC math test is not reliable or valid, we should take a closer look at what these terms actually mean.

Research on the Lack of Reliability and Validity of High Stakes Tests

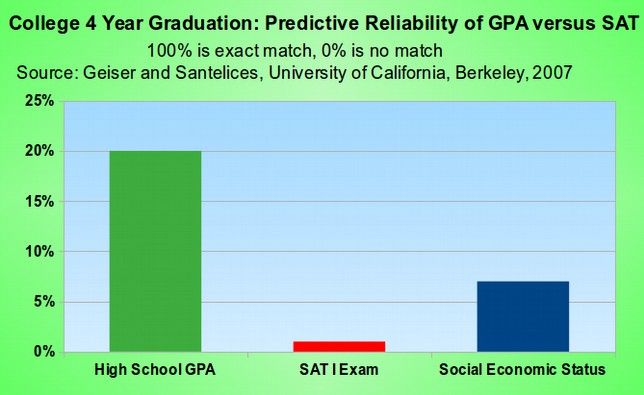

Reliability refers to whether a test gets consistent results from year to year and from test to test. There is also internal reliability, which has to do with the consistency of student responses to individual questions and whether these answers are consistent with answers to other questions. Validity refers to whether a test measures what it claims to measure and how it compares to other assessment methods that are known to be valid. In the case of SBAC, the test claims to measure career and college readiness. The reason the SBAC test is not reliable is that there is no way of measuring the consistency of tests or test answers when there are billions of possible tests. The reason the SBAC test is not valid is that it does not actually measure college readiness. In fact, no high stakes test has ever measured college readiness. The only factor that has been shown to consistently measure college readiness is a student's grade point average (GPA) across all of the high school courses they have taken.

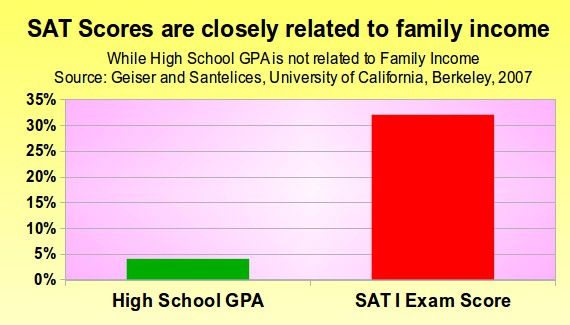

Here is a chart comparing the ability of student grades to predict college graduation with the ability of the SAT college entrance test to predict college graduation (based on a 2007 study of more than 81,000 students entering the University of California).

One reason that the SBAC test cannot measure college readiness is that, like all high stakes tests, it is not an accurate tool for measuring student achievement. Teachers who spend an entire year assessing students are much more accurate predictors of student achievement than any single high stakes test.

The second reason that the SBAC test is not a valid or reliable measure of college readiness is that, like all high stakes tests, it is biased against certain groups. Many studies have shown these groups to be low-income, female, and minority students. For example, a 2009 study of California students, comparing years when the California state test was required for graduation to years when it was not, found that requiring high stakes tests for high school graduation is particularly harmful to minority students: such requirements “disproportionately discourage minority students from continuing in high school, without improving achievement for those students or others.” Specifically, the researchers concluded that female and minority students were much more likely to fail the test and that the California high stakes test reduced the graduation rate of low-achieving minority and female students by 19%.

Here is a quote from this study: “We find that these negative effects were concentrated among low-achieving students, minority students, and female students... Low-achieving minority students and girls fail the exit exam at substantially higher rates than otherwise similar white and male students, leading to lower graduation rates under the exit exam requirement. These findings call into question both the effectiveness and the fairness of high school exit exam policies.”

Sean F. Reardon, Allison Atteberry, Nicole Arshan, Michal Kurlaender (2009). Effects of the California High School Exit Exam on Student Persistence, Achievement, and Graduation. Stanford, CA: Stanford University, Institute for Research on Educational Policy and Practice. http://web.stanford.edu/group/cepa/workingpapers/WORKING_PAPER_2009_12.pdf

The third reason that high stakes tests are not valid is that what they really measure is the wealth of the student's family. This is called the Zip Code effect, because wealthy families tend to live in certain zip codes. High stakes test scores are closely related to the income of the student's parents. High school GPA is also fairer to low-income students because it is not as closely tied to family income as a one-time, high stakes test. Here is a chart comparing how closely high school GPA is tied to family income with how closely SAT scores are tied to family income.

Comparing the SBAC Test to the NAEP Test

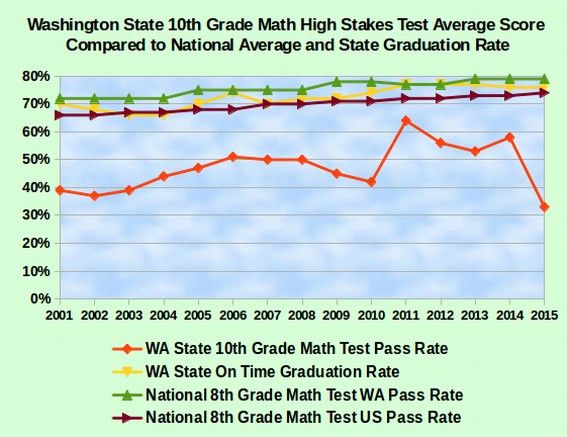

Another way to understand the terms reliability and validity is to compare less reliable high stakes tests to more reliable low stakes tests. The National Assessment of Educational Progress, or NAEP test, is a low stakes test that has been given in the United States for more than 30 years. It is low stakes because there are no adverse consequences for students who fail the test. Here is a chart comparing the pass rate on the NAEP test to the pass rate on high stakes tests in Washington state, and to the graduation rate in Washington state, over time.

Note that the scores on the NAEP math test have been gradually increasing over time, both in Washington state and in the nation, and that the pass rate in Washington state has consistently been about 5% above the national average for many years. This makes the NAEP test a reliable test. The fact that the NAEP test also closely tracks the gradually rising graduation rate in Washington state makes the NAEP test a valid test. It is also important to note that no person can simply declare a test to be reliable or valid. Only actual comparison data can show that a test is reliable and valid.

By contrast, the Washington state high stakes tests (called the WASL, the MSP, the EOC and the SBAC) have never been reliable or valid. Not only have the cut scores wandered all over the place, driven by political agendas rather than by student achievement, but, more importantly, there is no relationship between the high stakes test passing rates and the graduation rate in Washington state.

Comparing the Meaning of the Terms NAEP Basic and NAEP Proficient

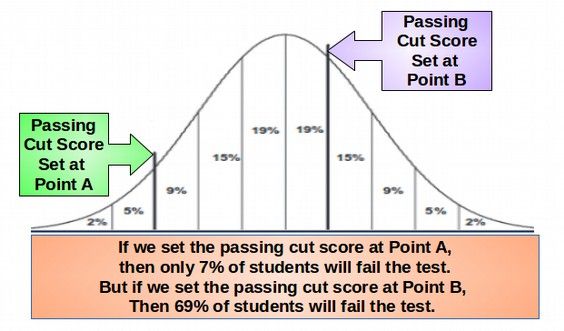

Now that we better understand why the SBAC test cannot possibly be reliable, valid or fair, either now or in the future, we will look at the terms NAEP Basic and NAEP Proficient, as they both play a crucial role in explaining why the SBAC test fails 67% of the students who take it. First, we should recognize that all high stakes tests are essentially ranking systems that group students into various categories. These groups are created using cut scores, which divide students into groups that we have traditionally labeled with the letter grades A, B, C, D and F.

In reality, student performance typically falls along a continuous, bell-shaped curve.

Traditionally, we have set cut scores so that about 10% of these students would get an A, 10% would get a B, 40% would get a C, 20% would get a D and about 20% would get an F and fail the test or fail the course.

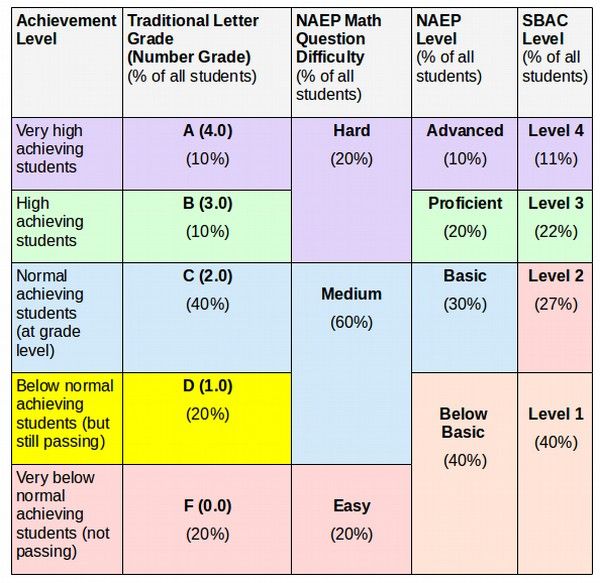

Unfortunately, the SBAC test uses a grading system that is different from the traditional grading system. To make matters even more complex, the NAEP math test (on which the SBAC math test is loosely based) uses yet another grading or ranking system. It is therefore important to understand how these various grading and ranking systems are related. Below is a table showing these relationships.

You can see from the above table that NAEP Hard Math questions can be answered by students with a letter grade of A or B, while NAEP Medium Math questions can be answered by students with a letter grade of C or D. Meanwhile, the NAEP ranking system made a controversial change to the traditional grading system: it turned a D grade, which has historically been a passing grade, into a failing grade. This meant that to pass the NAEP test, a student needed to be “at grade level,” with an average traditional grade of C. This doubled the percent of students who were labeled as failures from 20% to 40%, without any change in the actual performance of the students. The SBAC test went even further. SBAC test designers had the audacity to declare even students who are passing with a C grade and are at grade level to be failures (SBAC Level 2). This increased the percent of students who were labeled as failures from 40% to 67%. This is the surface reason why the SBAC test fails two out of three students who take the test: it is designed to fail even students who are at grade level!

Refuting the Claimed Reasons the SBAC Test Fails 67% of the Students Who Take the Test

Of course, the designers of the SBAC test claim that they have to fail 67% of the students who take the test in order to measure whether students are “career and college ready.” We have already seen that no high stakes test has ever been able to predict whether a student is career and college ready, so the claims of SBAC promoters are pure nonsense. In fact, merely changing standards and adding the SBAC test has no chance of making more kids career and college ready. It is magical thinking to make this claim. One might as well claim that waving a magic wand will make kids career and college ready.

Another reason that SBAC promoters give for failing two thirds of all students is that the state and students will save money on college remediation courses, because some colleges have agreed to let students “skip” entry-level courses if they get a Level 3 or 4 on their SBAC test. This plan is also doomed to failure. The last thing students should be doing is skipping important math courses because they got a high score on a single high stakes test. College math courses are completely different from high school math courses, and college students are at a different level of brain development than high school students. Encouraging students to skip foundational math courses places them at a greater risk of failing more advanced math courses. It is like trying to save money on building a house by skipping the foundation. You simply risk having the entire house fall down.

The final reason that SBAC promoters give for failing 67% of all students is that we live in a competitive global market and our students need to compete with students in other countries. This argument is also absurd for one very important reason: Washington state students, when adjusted for poverty, are among the highest achieving students in the nation and in the world on national and international math tests. There is no need to raise the bar any higher than it already is.

The Real Reason the SBAC Math Test Fails 67 Percent of All Students

Ask yourself these important questions: Why did the SBAC test designers decide to unfairly label normally achieving, at-grade-level students as failures? Why did they make NAEP Basic a failing score rather than a passing score? Why did they make SBAC Level 2 a failing score rather than a passing score? The answer is that the whole point of Common Core and the SBAC test is not to get kids college ready. Instead, it is to convince parents and voters that our public schools are failing so that they will agree to allow our public schools to be privatized and taken over by billionaires. SBAC and Common Core are weapons of mass deception, designed to create chaos in our public schools, destroy the self-esteem of our students, and turn them into compliant robots willing to obey the orders of their corporate masters.

What we need is not more rigorous standards and tests. Instead, what we need are more living-wage jobs so that fewer kids have to grow up under the extreme stress of poverty. This in turn requires a tax structure that does not hand all of the money over to the billionaires, which will not happen until a new group of people is elected. In other words, it is not our students who are to blame for the lack of jobs; it is our corrupt political leaders. The people who need to be held more accountable are not our hard-working students and teachers. They are our politicians in Olympia who are failing to fully fund our schools. They are the ones who are failing the real test.